### Description

All our Windows build pipelines already use CMake 3.26, except one: QNN ARM64.

This PR does the same for Linux build pipelines.

### Motivation and Context

This change is related to #15704 .

### Description

- Update to QNN SDK 2.9.0 for QNN pipelines

- Temporarily disable warnings as errors for QNN Windows x64 pipeline

- Note that this pipeline did not previously run to completion. It also

currently does not run for pull requests.

### Motivation and Context

Need to update and test the latest available version of the QNN SDK.

Rename the onnxruntime-Linux-CPU-2019 machine pool to "onnxruntime-Ubuntu2004-AMD-CPU". The old pool hit an internal error and has been stuck for more than a week, and I cannot make any changes to it. So I created a new pool with the same settings under a different name.

Also, move some android pipelines to

"onnxruntime-Linux-CPU-For-Android-CI" which uses a standard image from

https://github.com/actions/runner-images

### Description

* Update TensorRT 8.6 lib dependencies in dockerfile of TRT EP Perf

pipeline

* Avoid using `--allow_running_as_root` and build ORT with non-root user

### Motivation and Context

To fix the build issue on EP perf pipeline

Fixed

[AB#14615]

### Description

Add parameters so that some stages can use the intermediate output of another run.

### Motivation and Context

The NuGet workflow has 38 stages in 4 layers.

We had to run the whole workflow from the beginning to test one stage.

It could make life easier to run only one stage for testing.

like

### N.B.

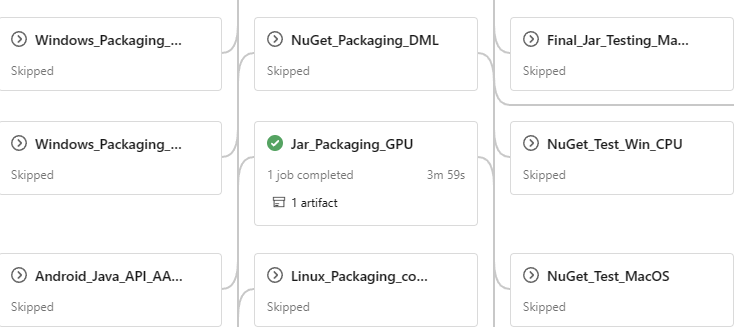

In this PR, Nuget_Test_Linux_CPU, Nuget_Test_LinuxGPU and

Jar_Packaging_GPU are enabled as the first step.

So I can start to move tests from Linux host to container

### Description

In 2021 we restricted onnx node test CI execution to the opset range 14-15 for ORT-TRT, the latest opsets that the TRT EP could support.

Update this range to opset 14-17 to improve the ORT-TRT unit test coverage, as [Nvidia announced that TRT 8.6 supports opset 17](https://github.com/onnx/onnx-tensorrt/blob/main/docs/operators.md).

### Description

* Reverting default TensorRT version to 8.5 as temporary fix

* Apart from that, this PR temporarily leaves this CI as a place to

validate user behavior that uses TRT 8.5 with latest ORT

### Context

* This CI pool is equipped with 2x Tesla M60 GPUs, which are no longer supported by TensorRT 8.6.

* Currently, other CIs use a single-T4 VM, but there is no VM with 2x T4 or another suitable dual-GPU configuration in the range.

* Once we decide which VM instance to migrate this CI to, TRT 8.6 can be enabled on this CI.

* According to

[Nvidia](https://docs.nvidia.com/deeplearning/tensorrt/release-notes/index.html):

* TensorRT 8.5.3 was the last release supporting NVIDIA Kepler (SM 3.x)

and NVIDIA Maxwell (SM 5.x) devices. *These devices are no longer

supported in TensorRT 8.6*. NVIDIA Pascal (SM 6.x) devices are

deprecated in TensorRT 8.6.

### Description

This PR resolves some of the non-critical code review comments in #14579.

- use `USE_JSEP` instead of `USE_JS` in build definition to make it less

ambiguous

- remove unused util functions from util.ts

- fix transpose.h

- other misc fixes

### Description

Update CUDA 11.6 to 11.8 for Windows pipelines.

This PR is only for Windows CUDA pipelines. It does not include any change for Linux pipelines or TensorRT pipelines.

### Motivation and Context

It is a planned feature for the upcoming ONNX Runtime release.

### Description

This change introduced the following new components into ONNX Runtime

Web:

- JavaScript Execution Provider (JSEP)

- Asynchronized inferencing execution powered by Emscripten's Asyncify

- WebGPU backend implemented in TypeScript

- initial implementation of kernels:

- elementwise operators (22)

- binary operators (5)

- tensor: Shape, Reshape, Transpose, Gemm

- nn: Conv, {Global}Maxpool, {Global}AveragePool

The code needs to be polished; still working on it.

## Q&A

What is JSEP?

> JSEP, aka JavaScript Execution Provider, is a new ONNX Runtime execution provider that specifically targets web environments (browsers). JSEP allows JavaScript code to kick in from various places when ONNX Runtime runs inference on a model.

Why JSEP?

> JSEP is a hybrid-mode EP that contains both C/C++ and TypeScript/JavaScript implementations. There are 2 strong reasons why we introduce JSEP:
>
> 1. The C/C++ part lets JSEP leverage ONNX Runtime's capabilities as much as possible, including graph transformers, optimizers, and the ability to fall back to the CPU EP. The TypeScript/JavaScript part makes kernel implementations much easier to develop and debug in the browser.
>
> 2. The asynchronous execution required by JavaScript APIs (e.g. `buffer.mapAsync()`) makes it impossible to run `OrtRun()` in a synchronous context (see the "async problem" section below). This is solved by using Emscripten's Asyncify.

What is WebGPU?

> WebGPU is the new GPU API available in browsers. It is one of only 2 APIs currently available for accessing the GPU from the browser (the other is WebGL).
>
> WebGPU is designed with more advanced and stronger features than WebGL, and is potentially the solution that offers the best GPU performance currently available for model inferencing.

What is the async problem and why do we have it?

> The "async problem" is that you cannot call an async function in a synchronous context. Think about the following C/C++ code:

> ```c
> // C-style declarations (API)
> typedef void (*ON_COMPLETE)(PVOID state, DATA *data);
> void read_data_from_file(FILEHANDLE file, ON_COMPLETE on_complete);
>
> // implementation
> DATA * my_impl_read_data_from_file_sync(FILEHANDLE file) {
>   // how to implement?
> }
> ```

> The answer is, it's impossible to implement this function. Usually we would try to find a sync version of the API, or launch a thread to call the async function and sync-wait on the main thread. Unfortunately, in the browser environment, neither is possible.
>
> WebGPU does not offer any synchronous API for data downloading (GPU to CPU). This is the only operation that MUST be async. As `OrtRun()` will eventually call into DataTransfer to copy data from GPU to CPU, and `OrtRun()` is a synchronous function, this cannot be done in the normal way.

What is Emscripten? How does the Asyncify feature resolve the problem?

> Emscripten is the C/C++ compiler for WebAssembly. It's what we use to compile ORT and generate the WebAssembly artifacts that run in browsers.
>
> Asyncify is a [compiler feature](https://emscripten.org/docs/porting/asyncify.html) that allows calling async functions from a synchronous context. In short, it generates code to unwind and rewind the call stack to emulate async execution. With this feature, we are able to call the async function inside the `OrtRun()` call.
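
As a rough analogy only (this is not how Emscripten implements Asyncify), the unwind/rewind idea can be sketched in plain JavaScript with a generator: a synchronous-looking body pauses at each async call, and a small driver resumes it when the result arrives. The names `fakeDownload`, `ortRunLike`, and `drive` are hypothetical and exist only for this illustration.

```js
// Hypothetical async "GPU to CPU download": resolves with the data later.
function fakeDownload(id) {
  return new Promise((resolve) => setTimeout(() => resolve(`data-${id}`), 0));
}

// Synchronous-looking body. Each `yield` marks a point where execution is
// suspended ("unwound") until the pending promise settles.
function* ortRunLike() {
  const a = yield fakeDownload(1);
  const b = yield fakeDownload(2);
  return [a, b];
}

// Driver: resumes ("rewinds") the generator each time a promise resolves.
function drive(gen) {
  return new Promise((resolve, reject) => {
    function step(value) {
      let result;
      try {
        result = gen.next(value);
      } catch (err) {
        reject(err);
        return;
      }
      if (result.done) {
        resolve(result.value);
      } else {
        Promise.resolve(result.value).then(step, reject);
      }
    }
    step(undefined);
  });
}

drive(ortRunLike()).then((out) => console.log(out));
```

The body of `ortRunLike` never deals with callbacks itself, which is the property Asyncify gives to `OrtRun()` at the WebAssembly level.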

## Design Overview

**Inter-op**

JSEP does pretty much the same thing as any other EP. It exposes an interface for inter-op with JavaScript, which is defined in onnxruntime/wasm/js_internal_api.js:

```js
// init JSEP
Module["jsepInit"] = function (backend, alloc, free, copy, copyAsync, createKernel, releaseKernel, run) {
  Module.jsepBackend = backend;
  Module.jsepAlloc = alloc;
  Module.jsepFree = free;
  Module.jsepCopy = copy;
  Module.jsepCopyAsync = copyAsync;
  Module.jsepCreateKernel = createKernel;
  Module.jsepReleaseKernel = releaseKernel;
  Module.jsepRun = run;
};
```

This simple JavaScript snippet defines all the language-barrier-level functions that JSEP requires to implement kernels and data transfers using JavaScript inside ONNX Runtime:

- `jsepBackend`: assigns the singleton object to the WebAssembly module
- `jsepAlloc` and `jsepFree`: implementation of the data transfer's Alloc() and Free()
- `jsepCopy`: synchronous copy (GPU to GPU, CPU to GPU)
- `jsepCopyAsync`: asynchronous copy (GPU to CPU)
- `jsepCreateKernel` and `jsepReleaseKernel`: manage a corresponding object that is maintained in JS to match the lifecycle of the Kernel in ORT
- `jsepRun`: OpKernel::Compute() should call into this

The abstraction above keeps the connections and dependencies between C/C++ and TypeScript/JavaScript to a minimum.
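
To make the wiring concrete, here is a minimal sketch of a JavaScript side registering its callbacks through `jsepInit`. The `Module` object is a stand-in for the Emscripten module, the backend is a toy in-memory store rather than WebGPU, and the argument lists of the individual callbacks are assumptions, since the description above does not specify them.

```js
// Stand-in for the Emscripten module object (normally created by the
// generated WebAssembly glue code).
const Module = {};

// Same shape as the jsepInit definition quoted above.
Module["jsepInit"] = function (backend, alloc, free, copy, copyAsync, createKernel, releaseKernel, run) {
  Module.jsepBackend = backend;
  Module.jsepAlloc = alloc;
  Module.jsepFree = free;
  Module.jsepCopy = copy;
  Module.jsepCopyAsync = copyAsync;
  Module.jsepCreateKernel = createKernel;
  Module.jsepReleaseKernel = releaseKernel;
  Module.jsepRun = run;
};

// Toy backend: "GPU buffers" are plain Uint8Arrays keyed by an integer id.
// Every callback signature below is an assumption made for illustration.
const backend = { buffers: new Map(), kernels: new Map(), nextId: 1 };

Module.jsepInit(
  backend,
  // alloc: reserve a buffer and return its id
  (size) => {
    const id = backend.nextId++;
    backend.buffers.set(id, new Uint8Array(size));
    return id;
  },
  // free: drop the buffer
  (id) => backend.buffers.delete(id),
  // copy: synchronous buffer-to-buffer copy
  (srcId, dstId) => backend.buffers.get(dstId).set(backend.buffers.get(srcId)),
  // copyAsync: asynchronous download, resolves with a copy of the data
  async (srcId) => backend.buffers.get(srcId).slice(),
  // createKernel / releaseKernel: track a JS object per ORT kernel
  (name, kernelId, attributes) => backend.kernels.set(kernelId, { name, attributes }),
  (kernelId) => backend.kernels.delete(kernelId),
  // run: 0 means success here (assumed convention)
  (kernelId) => (backend.kernels.has(kernelId) ? 0 : 1)
);

// Usage: allocate two buffers and copy one into the other.
const src = Module.jsepAlloc(4);
const dst = Module.jsepAlloc(4);
backend.buffers.get(src).set([1, 2, 3, 4]);
Module.jsepCopy(src, dst);
```

Because the C/C++ side only sees these eight entry points, the toy backend could be swapped for a real WebGPU one without touching the interface.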

**Resource Management**

The lifecycles of tensor data and kernels are managed by ORT (C/C++), but the implementation is left to JavaScript. The JavaScript code is responsible for implementing the callbacks correctly.

For WebGPU, the GPU data is managed by JavaScript using a singleton map (tensor_data_id => GPUBuffer). The GPU pipeline is managed as a singleton. Shaders are managed using a singleton map (shader_key => gpu_program), where shader_key is generated from cache_key (OP specific, including attributes) and the input shapes.
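
The shader map described above could look roughly like the sketch below. The key format, the function names, and the `build` callback are hypothetical; the description only says that the key is derived from the op-specific `cache_key` and the input shapes.

```js
// Hypothetical shader cache: shader_key => compiled program.
const programCache = new Map();

// Build shader_key from the op-specific cache_key plus the input shapes.
// The separator characters are an arbitrary choice for this sketch.
function makeShaderKey(cacheKey, inputShapes) {
  return `${cacheKey}|${inputShapes.map((dims) => dims.join("x")).join(";")}`;
}

// Return the cached program, or build and cache it on first use.
function getOrCreateProgram(cacheKey, inputShapes, build) {
  const key = makeShaderKey(cacheKey, inputShapes);
  let program = programCache.get(key);
  if (program === undefined) {
    program = build();
    programCache.set(key, program);
  }
  return program;
}

// Usage: the same op and shapes reuse a program, a new shape builds another.
let builds = 0;
const buildProgram = () => ({ id: ++builds });
const p1 = getOrCreateProgram("Transpose;perm=0,2,3,1", [[1, 3, 8, 8]], buildProgram);
const p2 = getOrCreateProgram("Transpose;perm=0,2,3,1", [[1, 3, 8, 8]], buildProgram);
const p3 = getOrCreateProgram("Transpose;perm=0,2,3,1", [[1, 3, 16, 16]], buildProgram);
```

Including the input shapes in the key means a shader specialized for one shape is never reused for another, at the cost of more cache entries.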

**about data transfer**

`js::DataTransfer::CopyTensor` is implemented to call either the synchronous or the asynchronous copy callback, depending on whether the destination is the GPU or not. Emscripten's macro `EM_ASYNC_JS` is used to wrap the async function so that it can be called in the synchronous context.
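
The dispatch rule can be sketched in JavaScript as follows. This is an illustration of the decision only, not the C++ implementation: the device tags, the tensor objects, and the stand-in callbacks are all assumptions.

```js
// Hypothetical device tags; the real code inspects the tensors' device info.
const CPU = "cpu";
const GPU = "gpu";

// Stand-ins for the jsepCopy / jsepCopyAsync callbacks that record which
// path was taken.
const calls = [];
const jsepCopy = (src, dst) => {
  calls.push("sync");
};
const jsepCopyAsync = async (src, dst) => {
  calls.push("async");
};

// Choose the callback from the destination device: a GPU destination uses
// the synchronous copy, a GPU-to-CPU download must go through the async one.
function copyTensor(src, dst) {
  if (dst.device === GPU) {
    jsepCopy(src, dst);
    return Promise.resolve();
  }
  if (src.device === GPU) {
    return jsepCopyAsync(src, dst);
  }
  throw new Error("CPU to CPU copy is not handled by this sketch");
}

// Usage: an upload followed by a download.
copyTensor({ device: CPU }, { device: GPU });
copyTensor({ device: GPU }, { device: CPU });
```

In the real EP the async branch is the one wrapped with `EM_ASYNC_JS`, so the C++ caller still sees an ordinary blocking call.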

**run kernel in JS**

The kernel class constructor calls `jsepCreateKernel()` once, with an optional per-kernel serialization to pass attributes into JavaScript.

`Compute()` is implemented so that metadata serialization is performed in a base class, and JavaScript code can access the data using the Emscripten-specific built-in macro `EM_ASM_*`.

**disabled features**

Memory pattern is force-disabled, because WebGPU data is not represented by a general memory model (one in which a buffer can be represented by offset + size).

Concurrent run support is disabled. WebGPU is stateful and also has async function calls. Supporting concurrent runs would significantly increase the complexity, and we would not get any real benefit from it.

**prefer channels last**

JSEP prefers channels last and returns `DataLayout::NHWC` from the `GetPreferredLayout()` method. This lets the graph transformers preprocess the graph into a channels-last form so that a more optimized WebGPU shader can be used.
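
For readers less familiar with the layouts, the sketch below shows what moving from NCHW to NHWC means in terms of flat buffer offsets. It is a plain CPU illustration with a hypothetical helper name, not the ORT layout transformer or a WebGPU shader.

```js
// Convert a flat NCHW buffer to NHWC. dims = [N, C, H, W].
function nchwToNhwc(data, [N, C, H, W]) {
  const out = new Float32Array(data.length);
  for (let n = 0; n < N; n++) {
    for (let c = 0; c < C; c++) {
      for (let h = 0; h < H; h++) {
        for (let w = 0; w < W; w++) {
          const src = ((n * C + c) * H + h) * W + w; // NCHW offset
          const dst = ((n * H + h) * W + w) * C + c; // NHWC offset
          out[dst] = data[src];
        }
      }
    }
  }
  return out;
}

// 1 image, 2 channels, 1x2 pixels: channel 0 = [1, 2], channel 1 = [10, 20].
const nhwc = nchwToNhwc(new Float32Array([1, 2, 10, 20]), [1, 2, 1, 2]);
console.log(Array.from(nhwc)); // [1, 10, 2, 20]
```

In NHWC, all channel values of one pixel sit next to each other in memory, which is the property the channels-last shaders rely on.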

**Testing code**

It's impossible to test JSEP directly because JSEP itself does not contain any kernel implementation. However, it has the kernel registration, which needs to work together with the corresponding JavaScript code. There are unit tests that run ONNX models through the JavaScript API.

---------

Co-authored-by: Scott McKay <skottmckay@gmail.com>

TensorRT will load/unload libraries as builder objects are created and torn down. This will happen for every single unit test, which leads to excessive test execution time due to that overhead.

This overhead has steadily increased over the past few TensorRT versions as the library objects get bigger, leading to 8 hours to run all the unit tests. Nvidia suggests keeping a placeholder builder object around to avoid this.

### Description


Integrate react native e2e test framework with detox.

https://wix.github.io/Detox/

Good build in CI:

https://dev.azure.com/onnxruntime/onnxruntime/_build/results?buildId=946695&view=results

### Motivation and Context


Write cross-platform end-to-end tests in JavaScript.

Resolve flaky e2e tests in react native ci pipelines.

---------

Co-authored-by: rachguo <rachguo@rachguos-Mini.attlocal.net>

Co-authored-by: rachguo <rachguo@rachguos-Mac-mini.local>

### Description

- Updates the QNN Windows ARM64 pipeline to use a new image with Visual

Studio 2022 (updated from VS 2019)

- Creates a new gtest fixture class that skips tests for the QNN CPU backend if we detect that the QNN CPU backend is not available/functional. The current Windows ARM64 VM does not support any QNN backend.

### Motivation and Context

Visual Studio 2022 adds support for native arm64 compilation. This

pipeline will help catch any build regressions on Windows ARM64 w/ VS

2022.

### Description


Add Swift Package Manager (SPM) support for ORT based on #14621

- uses the existing objective-c bindings

- some re-organization of the directory structure was required but the

contents of the files are unchanged, apart from adjustments due to file

movements

Add tool for updating ORT native pod used in the SPM package

Update CIs to use ORT native pod from build, and build/test using SPM

### Motivation and Context


iOS developers are using SPM as much as cocoapods, so adding SPM means

both are catered for.

### Description

Bump the ruff version in CI and fix new lint errors.

- This change enables the flake8-implicit-str-concat rules, which help detect unintended string concatenations: https://beta.ruff.rs/docs/rules/#flake8-implicit-str-concat-isc

- Update gitignore to include common python files that we want to

exclude.

### Motivation and Context

Code quality

### Description

1. Disable XNNPack EP's tests in Windows CI pipeline

The EP code has a known problem (memory alignment), but the problem does not impact the scenarios we ship the code for. Currently we only use the XNNPack EP in mobile apps and on the web, and we already have pipelines covering those usages. We need to prioritize fixing the bugs found in those pipelines, and there are no resources to spend on this Windows one. We can re-enable the tests once we reach an agreement on how to fix the memory alignment bug.

2. Delete anybuild.yml which was for an already deleted pipeline.

3. Move Windows CPU pipelines to AMD CPU machine pools which are

cheaper.

4. Disable some qdq/int8 model tests that will fail if the CPU doesn't

have Intel AVX512 8-bit instructions.

### Description


* Integrate TRT 8.6EA on relevant Linux/Windows/pkg pipelines

* Update onnx-tensorrt to 8.6

* Add new dockerfiles for TRT 8.6 and clean old ones

* Update

[CGManifest](https://github.com/microsoft/onnxruntime/tree/main/cgmanifests)

files and ort build deps version

* yml/script update

* Enable built-in TRT parser option on TRT related pipelines by default

* Exclude test TopKOperator.Top3ExplicitAxisInfinity out of TRT EP tests

(8.6-EA has issue with topk operator)

This change moves the DML CI pipeline to the A10 machines and fixes or

disables tests that were failing from this change.

- Max error rate threshold was increased for Image Tests

- Some failing batch tests were disabled

---------

Co-authored-by: Changming Sun <chasun@microsoft.com>

### Description


As title

### Motivation and Context


https://github.com/microsoft/onnxruntime/issues/15110

---------

Co-authored-by: rachguo <rachguo@rachguos-Mac-mini.local>

Co-authored-by: rachguo <rachguo@rachguos-Mini.attlocal.net>

Co-authored-by: Scott McKay <skottmckay@gmail.com>

### Description

Add a script to validate the generated NPM packages and publish them to artifacts, so that the release pipeline can use them.

Once this PR is merged, I will update the NPM package release pipeline.

### Description

Implement Optional Type metadata support in the library.

Implement optional support in C# API along with metadata.

Implement Sequence, Map, Optional test data support

and test execution.

Prune tests and provide more details for failing tests in C# code.

Note, this PR does not enable running onnx test models in C++.

### Motivation and Context

Opset18 optional type support.

### Description

1. Move it to a separate pool that uses the same image as [the public hosted pool](https://learn.microsoft.com/en-us/azure/devops/pipelines/agents/hosted?view=azure-devops&tabs=yaml). Also, create a beta pool that contains the next version of the hosted pool's image, and add jobs to our post-merge pipeline to test whether the next image version will break our CI. This way, we will usually have at least one week to prepare.

2. Change the CMake generator used in our pipelines from "Ninja" to "MinGW Makefiles", because the latest version of CMake doesn't work with the latest version of Ninja. People who prefer Ninja can still use it in their local builds by passing "--cmake_generator ninja" to [build.py](https://github.com/microsoft/onnxruntime/blob/main/tools/ci_build/build.py).

3. Delete eager mode CI pipeline.

### Motivation and Context

I need to update the software we have in our CI build machines, and I

need to resolve this incompatibility issue. In more detail, the build

error I hit was:

em++: error:

CMakeFilesonnxruntime_mlas_test.dirC_a_work1sonnxruntimetestmlasunittesttest_activation.cpp.o:

No such file or directory

("CMakeFilesonnxruntime_mlas_test.dirC_a_work1sonnxruntimetestmlasunittesttest_activation.cpp.o"

was expected to be an input file, based on the commandline arguments

provided)

After this PR we will deprecate Python 3.7 support. The eager mode CI pipeline is the last one that still uses Python 3.7. Then we can rework PR #10953, made by [fs-eire](https://github.com/fs-eire) last year.

Fixed

[AB#14435](https://aiinfra.visualstudio.com/6a833879-cd9b-44a4-a9de-adc2d818f13c/_workitems/edit/14435)

Add workflow to update Objective-C API docs. Remove the Objective-C API doc generation step from the packaging pipeline.

There are similar workflows for automatically updating other language API docs. This change enables this for Objective-C too.

Ensure that we build with a known version of NDK and are not surprised when the default version on the build machine changes.

A similar change was made for other Android build pipelines previously, but this one was missed.

### Description

1. The protoc package on nuget.org contains binaries for Windows_x86/Windows_x64/Linux_x86/Linux_x64/MacOS_x64, which cover most use cases. It does not have binaries for ARM64, but those are only needed when we cross-compile for Intel CPUs on ARM CPUs, which is rare. If you have such a need, you can always build protoc from source yourself and pass it to build.py as "--path_to_protoc_exe". You can do the same if you have security concerns about using prebuilt binaries from outside.

2. Remove the GoogleTestAdapter-related code. That code is no longer maintained.

### Motivation and Context

As a follow-up of PR #15190.

### Description

Update mimalloc dependency.

### Motivation and Context

The latest release contains important fixes, including memory leak fixes, and is used by customers.

WindowsAI build failing due to deprecated .NET5 SDK missing in build

image

.NET5 was deprecated last year, and recently the build machine images

have been updated to not include this SDK.

Unblock failing builds by force-installing the .NET5 SDK as part of the build pipeline.

### Description

Upgrade remaining Python to 3.11, removing 3.7

### Motivation and Context


### Description

Update python package pipeline to support 3.11

### Motivation and Context


### Description

In a merge branch, the run only reads the cache generated by the main build. As a result, runs in a merge branch will not upload a new cache except the first time.

### Motivation and Context

1. Reduce the cache storage.

If there are big changes, devs should trigger the specific builds manually at https://dev.azure.com/onnxruntime/onnxruntime/_build. A manually triggered build still reads the cache of its own branch.

Temporarily remove Azure build check to unblock PR(s).

We need to investigate the sudden build failure and reenable.

Co-authored-by: Randy Shuai <rashuai@microsoft.com>