mirror of

https://github.com/saymrwulf/transformers.git

synced 2026-05-14 20:58:08 +00:00

german medbert model details (#8266)

* model details
* Apply suggestions from code review

Co-authored-by: Julien Chaumond <chaumond@gmail.com>

parent 96baaafd34

commit ddeecf08e6

1 changed file with 33 additions and 0 deletions

model_cards/smanjil/German-MedBERT/README.md

@@ -0,0 +1,33 @@

---
language: de
---

# German Medical BERT

This model is based on German BERT and fine-tuned on German-language medical-domain text.
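A minimal loading sketch may help here. It assumes the checkpoint is published on the Hugging Face model hub under the same identifier as this model card's directory (`smanjil/German-MedBERT`), and it uses the generic `AutoTokenizer` and `AutoModel` classes; the helper names `load_model` and `embed` are hypothetical and exist only for illustration.

```python
# Assumption: the hub identifier matches the model card path in this commit.
MODEL_ID = "smanjil/German-MedBERT"


def load_model(model_id: str = MODEL_ID):
    """Download (or load from the local cache) the tokenizer and encoder."""
    # Imported lazily so that defining this helper does not require
    # the transformers package to be installed.
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModel.from_pretrained(model_id)
    return tokenizer, model


def embed(text: str, model_id: str = MODEL_ID):
    """Return the encoder's final hidden states for a single sentence."""
    tokenizer, model = load_model(model_id)
    inputs = tokenizer(text, return_tensors="pt")
    outputs = model(**inputs)
    # Index 0 holds the last hidden state, shaped (batch, tokens, hidden).
    return outputs[0]
```

Calling `embed("Der Patient klagt über Kopfschmerzen.")` requires network access on first use and returns one hidden vector per token. For the classification task described in this card, a task-specific head would still need to be trained on top of the encoder.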

## Overview

**Language model:** bert-base-german-cased

**Language:** German

**Fine-tuning:** Medical articles (diseases, symptoms, therapies, etc.)

**Eval data:** NTS-ICD-10 dataset (Classification)

**Infrastructure:** Google Colab

## Details

- We fine-tuned the model using PyTorch with the Hugging Face library on a Colab GPU.
- We used the standard fine-tuning parameter settings from the original BERT paper.
- For classification, however, we had to train for up to 25 epochs.
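The settings above can be sketched as a small configuration grid. The batch sizes and learning rates below are the ranges recommended for fine-tuning in the original BERT paper; which of them were actually used for this model is not stated here, so the grid is an assumption, and the names `FineTuneConfig` and `candidate_configs` are hypothetical. Only the 25-epoch ceiling comes from this model card.

```python
from dataclasses import dataclass
from itertools import product

# Fine-tuning ranges recommended in the original BERT paper.
BERT_BATCH_SIZES = (16, 32)
BERT_LEARNING_RATES = (5e-5, 3e-5, 2e-5)

# From this model card: classification needed up to 25 epochs,
# well beyond the 2 to 4 epochs the paper suggests.
MAX_EPOCHS = 25


@dataclass(frozen=True)
class FineTuneConfig:
    """One candidate hyperparameter setting (illustrative only)."""

    batch_size: int
    learning_rate: float
    max_epochs: int = MAX_EPOCHS


def candidate_configs():
    """Enumerate every batch-size / learning-rate combination."""
    return [
        FineTuneConfig(batch_size, learning_rate)
        for batch_size, learning_rate in product(
            BERT_BATCH_SIZES, BERT_LEARNING_RATES
        )
    ]
```

Each configuration could then be passed to whatever training loop is in use, stopping early once the validation score no longer improves.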

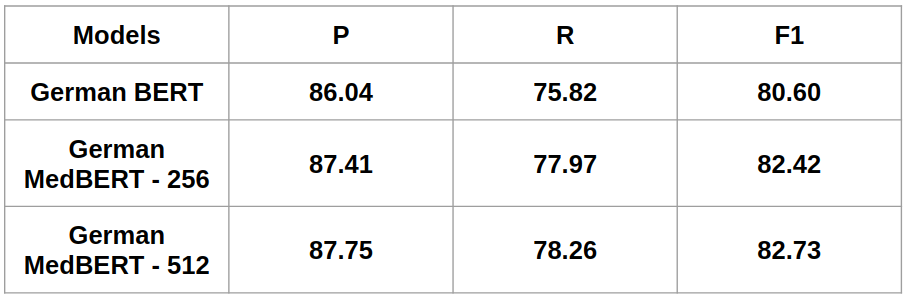

## Performance (micro-averaged precision, recall, and F1 score for multilabel code classification)
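For readers unfamiliar with micro-averaging in the multilabel setting, the following is a minimal sketch of how such scores are commonly computed: true positives, false positives, and false negatives are pooled over all documents and labels before the ratios are taken. The function name `micro_prf` and the label codes in the usage note are illustrative assumptions and are not taken from the evaluation behind this model card.

```python
from typing import Sequence, Set, Tuple


def micro_prf(
    gold: Sequence[Set[str]], pred: Sequence[Set[str]]
) -> Tuple[float, float, float]:
    """Micro-averaged precision, recall, and F1 over per-document label sets."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must contain the same number of documents")

    tp = fp = fn = 0
    for gold_labels, pred_labels in zip(gold, pred):
        tp += len(gold_labels & pred_labels)  # predicted and correct
        fp += len(pred_labels - gold_labels)  # predicted but wrong
        fn += len(gold_labels - pred_labels)  # missed gold labels

    # Guard against division by zero when nothing was predicted or labeled.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return precision, recall, f1
```

With two made-up documents, `micro_prf([{"A00", "B01"}, {"C02"}], [{"A00"}, {"C02", "D03"}])` pools 2 true positives, 1 false positive, and 1 false negative, so precision, recall, and F1 all come out to 2/3.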

## Author

Manjil Shrestha: `shresthamanjil21 [at] gmail.com`

Get in touch:

[LinkedIn](https://www.linkedin.com/in/manjil-shrestha-038527b4/)
