Mirror of https://github.com/saymrwulf/pytorch.git, synced 2026-05-15 21:00:47 +00:00

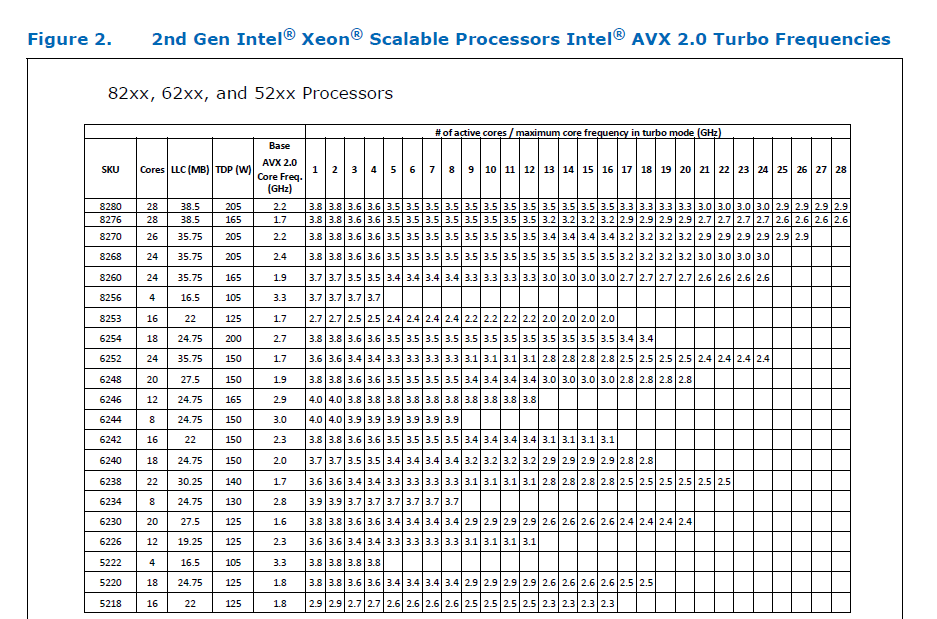

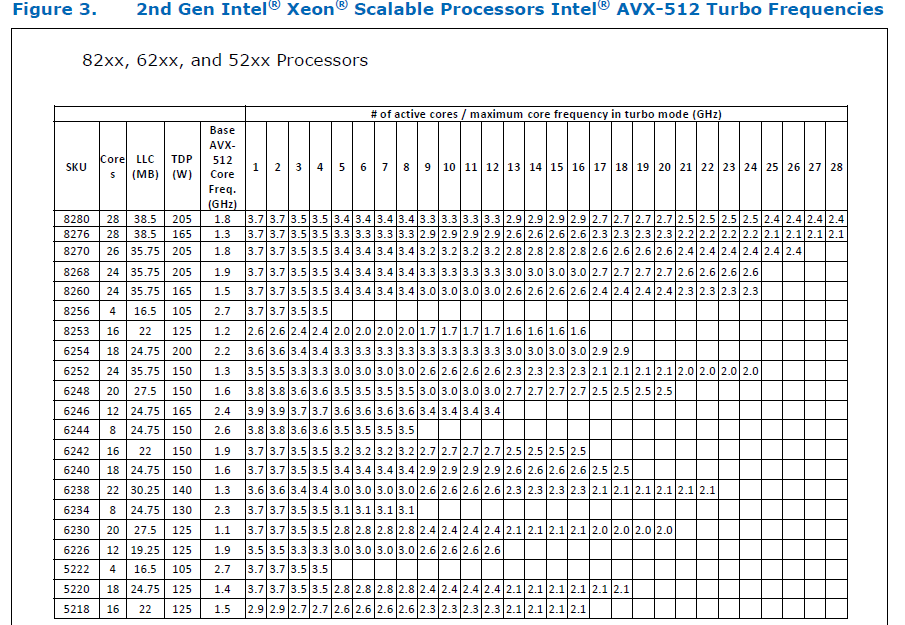

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/61903

### Remaining Tasks

- [ ] Collate results of benchmarks on two Intel Xeon machines (with & without CUDA, to check if CPU throttling causes issues with GPUs) and make graphs, including Roofline model plots (Intel Advisor can't make them with libgomp, only with Intel OpenMP).

### Summary

1. This draft PR produces binaries with 3 types of ATen kernels: default, AVX2, and AVX512. Using the environment variable `ATEN_AVX512_256=TRUE` also results in 3 types of kernels, but the compiler can use 32 ymm registers for AVX2, instead of the default 16. ATen kernels for `CPU_CAPABILITY_AVX` have been removed.
2. `nansum` is not using the AVX512 kernel right now, as it has poorer accuracy for Float16 than AVX2 or DEFAULT do, and their respective accuracies aren't very good either (#59415). It was more convenient to disable AVX512 dispatch for all dtypes of `nansum` for now.
3. On Windows, ATen Quantized AVX512 kernels are not being used, as quantization tests are flaky. If `--continue-through-failure` is used, then `test_compare_model_outputs_functional_static` fails. But if this test is skipped, `test_compare_model_outputs_conv_static` fails. If both these tests are skipped, then a third one fails. These are hard to debug right now due to not having access to a Windows machine with AVX512 support, so it was more convenient to disable AVX512 dispatch of all ATen Quantized kernels on Windows for now.
4. One test is currently being skipped: [`test_lstm` in `quantization.bc`](https://github.com/pytorch/pytorch/issues/59098). It fails only on Cascade Lake machines, irrespective of the `ATEN_CPU_CAPABILITY` used, because FBGEMM uses `AVX512_VNNI` on machines that support it. The value of `reduce_range` should be set to `False` on such machines.

The list of the changes is at https://gist.github.com/imaginary-person/4b4fda660534f0493bf9573d511a878d.

Credits to ezyang for proposing `AVX512_256`: these kernels use AVX2 intrinsics but benefit from 32 registers, instead of the 16 ymm registers that AVX2 uses.

Credits to limo1996 for the initial proposal, and for optimizing `hsub_pd` & `hadd_pd`, which didn't have direct AVX512 equivalents, and are being used in some kernels. He also refactored `vec/functional.h` to remove duplicated code.

Credits to quickwritereader for helping fix 4 failing complex multiplication & division tests.

### Testing

1. `vec_test_all_types` was modified to test basic AVX512 support, as tests already existed for AVX2. Only one test had to be modified, as it was hardcoded for AVX2.
2. `pytorch_linux_bionic_py3_8_gcc9_coverage_test1` & `pytorch_linux_bionic_py3_8_gcc9_coverage_test2` are now using `linux.2xlarge` instances, as they support AVX512. They were used for testing AVX512 kernels, as AVX512 kernels are being used by default in both of the CI checks. Windows CI checks had already been using machines with AVX512 support.

### Would the downclocking caused by AVX512 pose an issue?

I think it's important to note that AVX2 causes downclocking as well, and the additional downclocking caused by AVX512 may not hamper performance on some Skylake machines & beyond, because of the double vector-size. I think that [this post with verifiable references is a must-read](https://community.intel.com/t5/Software-Tuning-Performance/Unexpected-power-vs-cores-profile-for-MKL-kernels-on-modern-Xeon/m-p/1133869/highlight/true#M6450). Also, AVX512 would _probably not_ hurt performance on a high-end machine, [but measurements are recommended](https://lemire.me/blog/2018/09/07/avx-512-when-and-how-to-use-these-new-instructions/). In case it does, `ATEN_AVX512_256=TRUE` can be used for building PyTorch, as AVX2 can then use 32 ymm registers instead of the default 16. [FBGEMM uses `AVX512_256` only on Xeon D processors](https://github.com/pytorch/FBGEMM/pull/209), which are said to have poor AVX512 performance.

This [official data](https://www.intel.com/content/dam/www/public/us/en/documents/specification-updates/xeon-scalable-spec-update.pdf) is for the Intel Skylake family, and the first link helps understand its significance. Cascade Lake & Ice Lake SP Xeon processors are said to be even better when it comes to AVX512 performance.

Here is the corresponding data for [Cascade Lake](https://cdrdv2.intel.com/v1/dl/getContent/338848).

The corresponding data isn't publicly available for Intel Xeon SP 3rd gen (Ice Lake SP), but [Intel mentioned that the 3rd gen has frequency improvements pertaining to AVX512](https://newsroom.intel.com/wp-content/uploads/sites/11/2021/04/3rd-Gen-Intel-Xeon-Scalable-Platform-Press-Presentation-281884.pdf). Ice Lake SP machines also have 48 KB L1D caches, so that's another reason for AVX512 performance to be better on them.

### Is PyTorch always faster with AVX512?

No, but then PyTorch is not always faster with AVX2 either. Please refer to #60202. The benefit from vectorization is apparent with small tensors that fit in caches, or in kernels that are more compute heavy. For instance, AVX512 or AVX2 would yield no benefit for adding two 64 MB tensors, but adding two 1 MB tensors would do well with AVX2, and even more so with AVX512.

It seems that memory-bound computations, such as adding two 64 MB tensors, can be slow with vectorization (depending upon the number of threads used), as the effects of downclocking can then be observed.

Original pull request: https://github.com/pytorch/pytorch/pull/56992

Reviewed By: soulitzer

Differential Revision: D29266289

Pulled By: ezyang

fbshipit-source-id: 2d5e8d1c2307252f22423bbc14f136c67c3e6184

279 lines

12 KiB

CMake


# This ill-named file does a number of things:
# - Installs Caffe2 header files (this has nothing to do with code generation)
# - Configures caffe2/core/macros.h
# - Creates an ATen target for its generated C++ files and adds it
#   as a dependency
# - Reads build lists defined in build_variables.bzl

################################################################################
# Helper functions
################################################################################

function(filter_list output input)
  unset(result)
  foreach(filename ${${input}})
    foreach(pattern ${ARGN})
      if("${filename}" MATCHES "${pattern}")
        list(APPEND result "${filename}")
      endif()
    endforeach()
  endforeach()
  set(${output} ${result} PARENT_SCOPE)
endfunction()

function(filter_list_exclude output input)
  unset(result)
  foreach(filename ${${input}})
    foreach(pattern ${ARGN})
      if(NOT "${filename}" MATCHES "${pattern}")
        list(APPEND result "${filename}")
      endif()
    endforeach()
  endforeach()
  set(${output} ${result} PARENT_SCOPE)
endfunction()
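
The two helpers above take the *name* of an input list plus one or more CMake regular expressions. A minimal sketch of calling `filter_list` in script mode follows; the file name `filter_demo.cmake` and the paths in `all_srcs` are hypothetical and only serve as illustration, and the function body is copied from the listing so the script is self-contained.

```cmake
# Run with: cmake -P filter_demo.cmake   (hypothetical file name)
cmake_minimum_required(VERSION 3.10)

# Copy of filter_list from the listing, so this script runs on its own.
function(filter_list output input)
  unset(result)
  foreach(filename ${${input}})
    foreach(pattern ${ARGN})
      if("${filename}" MATCHES "${pattern}")
        list(APPEND result "${filename}")
      endif()
    endforeach()
  endforeach()
  set(${output} ${result} PARENT_SCOPE)
endfunction()

# Made-up input list, for illustration only.
set(all_srcs "a/foo.cpp" "a/foo.h" "b/bar.cu" "b/baz.cpp")

# The second argument is the variable name, not its expansion.
# Patterns are regular expressions, not globs.
filter_list(cpp_srcs all_srcs "\\.cpp$")
message(STATUS "cpp_srcs = ${cpp_srcs}")
```

With this input, `cpp_srcs` should hold `a/foo.cpp` and `b/baz.cpp`. One thing to keep in mind when reading `filter_list_exclude`: as written, it appends a file once for every pattern the file does *not* match, so with more than one pattern a file that matches only some of them still passes, and a file that matches none is appended more than once. With a single pattern it behaves as a plain exclusion filter.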

################################################################################

# ---[ Write the macros file
configure_file(
    ${CMAKE_CURRENT_LIST_DIR}/../caffe2/core/macros.h.in
    ${CMAKE_BINARY_DIR}/caffe2/core/macros.h)

# ---[ Installing the header files
install(DIRECTORY ${CMAKE_CURRENT_LIST_DIR}/../caffe2
        DESTINATION include
        FILES_MATCHING PATTERN "*.h")
if(NOT INTERN_BUILD_ATEN_OPS)
  install(DIRECTORY ${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/core
          DESTINATION include/ATen
          FILES_MATCHING PATTERN "*.h")
endif()
install(FILES ${CMAKE_BINARY_DIR}/caffe2/core/macros.h
        DESTINATION include/caffe2/core)

# ---[ ATen specific
if(INTERN_BUILD_ATEN_OPS)
  if(MSVC)
    set(OPT_FLAG "/fp:strict ")
  else(MSVC)
    set(OPT_FLAG "-O3 ")
    if("${CMAKE_BUILD_TYPE}" MATCHES "Debug")
      set(OPT_FLAG " ")
    endif()
  endif(MSVC)

  if(NOT MSVC AND NOT "${CMAKE_C_COMPILER_ID}" MATCHES "Clang")
    set_source_files_properties(${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/MapAllocator.cpp PROPERTIES COMPILE_FLAGS "-fno-openmp")
  endif()

  file(GLOB cpu_kernel_cpp_in "${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/native/cpu/*.cpp" "${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/native/quantized/cpu/kernels/*.cpp")

  list(APPEND CPU_CAPABILITY_NAMES "DEFAULT")
  list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG}")

  if(CXX_AVX512_FOUND)
    set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -DHAVE_AVX512_CPU_DEFINITION")
    list(APPEND CPU_CAPABILITY_NAMES "AVX512")
    if(MSVC)
      list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG}/arch:AVX512")
    else(MSVC)
      list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG} -mavx512f -mavx512bw -mavx512vl -mavx512dq -mfma")
    endif(MSVC)
  endif(CXX_AVX512_FOUND)

  if(CXX_AVX2_FOUND)
    set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -DHAVE_AVX2_CPU_DEFINITION")

    # Some versions of GCC pessimistically split unaligned load and store
    # instructions when using the default tuning. This is a bad choice on
    # new Intel and AMD processors so we disable it when compiling with AVX2.
    # See https://stackoverflow.com/questions/52626726/why-doesnt-gcc-resolve-mm256-loadu-pd-as-single-vmovupd#tab-top
    check_cxx_compiler_flag("-mno-avx256-split-unaligned-load -mno-avx256-split-unaligned-store" COMPILER_SUPPORTS_NO_AVX256_SPLIT)
    if(COMPILER_SUPPORTS_NO_AVX256_SPLIT)
      set(CPU_NO_AVX256_SPLIT_FLAGS "-mno-avx256-split-unaligned-load -mno-avx256-split-unaligned-store")
    endif(COMPILER_SUPPORTS_NO_AVX256_SPLIT)

    list(APPEND CPU_CAPABILITY_NAMES "AVX2")
    if(DEFINED ENV{ATEN_AVX512_256})
      if($ENV{ATEN_AVX512_256} MATCHES "TRUE")
        if(CXX_AVX512_FOUND)
          message("-- ATen AVX2 kernels will use 32 ymm registers")
          if(MSVC)
            list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG}/arch:AVX512")
          else(MSVC)
            list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG} -march=native ${CPU_NO_AVX256_SPLIT_FLAGS}")
          endif(MSVC)
        endif(CXX_AVX512_FOUND)
      endif()
    else()
      if(MSVC)
        list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG}/arch:AVX2")
      else(MSVC)
        list(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG} -mavx2 -mfma ${CPU_NO_AVX256_SPLIT_FLAGS}")
      endif(MSVC)
    endif()
  endif(CXX_AVX2_FOUND)

  if(CXX_VSX_FOUND)
    SET(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -DHAVE_VSX_CPU_DEFINITION")
    LIST(APPEND CPU_CAPABILITY_NAMES "VSX")
    LIST(APPEND CPU_CAPABILITY_FLAGS "${OPT_FLAG} ${CXX_VSX_FLAGS}")
  endif(CXX_VSX_FOUND)

  list(LENGTH CPU_CAPABILITY_NAMES NUM_CPU_CAPABILITY_NAMES)
  math(EXPR NUM_CPU_CAPABILITY_NAMES "${NUM_CPU_CAPABILITY_NAMES}-1")

  # The sources list might get reordered later based on the capabilities.
  # See NOTE [ Linking AVX and non-AVX files ]
  foreach(i RANGE ${NUM_CPU_CAPABILITY_NAMES})
    foreach(IMPL ${cpu_kernel_cpp_in})
      string(REPLACE "${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/" "" NAME ${IMPL})
      list(GET CPU_CAPABILITY_NAMES ${i} CPU_CAPABILITY)
      set(NEW_IMPL ${CMAKE_BINARY_DIR}/aten/src/ATen/${NAME}.${CPU_CAPABILITY}.cpp)
      configure_file(${IMPL} ${NEW_IMPL} COPYONLY)
      set(cpu_kernel_cpp ${NEW_IMPL} ${cpu_kernel_cpp}) # Create list of copies
      list(GET CPU_CAPABILITY_FLAGS ${i} FLAGS)
      if(MSVC)
        set(EXTRA_FLAGS "/DCPU_CAPABILITY=${CPU_CAPABILITY} /DCPU_CAPABILITY_${CPU_CAPABILITY}")
      else(MSVC)
        set(EXTRA_FLAGS "-DCPU_CAPABILITY=${CPU_CAPABILITY} -DCPU_CAPABILITY_${CPU_CAPABILITY}")
      endif(MSVC)
      # Disable certain warnings for GCC-9.X
      if(CMAKE_COMPILER_IS_GNUCXX AND (CMAKE_CXX_COMPILER_VERSION VERSION_GREATER 9.0.0))
        if(("${NAME}" STREQUAL "native/cpu/GridSamplerKernel.cpp") AND ("${CPU_CAPABILITY}" STREQUAL "DEFAULT"))
          # See https://github.com/pytorch/pytorch/issues/38855
          set(EXTRA_FLAGS "${EXTRA_FLAGS} -Wno-uninitialized")
        endif()
        if("${NAME}" STREQUAL "native/quantized/cpu/kernels/QuantizedOpKernels.cpp")
          # See https://github.com/pytorch/pytorch/issues/38854
          set(EXTRA_FLAGS "${EXTRA_FLAGS} -Wno-deprecated-copy")
        endif()
      endif()
      set_source_files_properties(${NEW_IMPL} PROPERTIES COMPILE_FLAGS "${FLAGS} ${EXTRA_FLAGS}")
    endforeach()
  endforeach()
  list(APPEND ATen_CPU_SRCS ${cpu_kernel_cpp})

  file(GLOB_RECURSE all_python "${CMAKE_CURRENT_LIST_DIR}/../tools/codegen/*.py")

  set(GEN_ROCM_FLAG)
  if(USE_ROCM)
    set(GEN_ROCM_FLAG --rocm)
  endif()

  set(CUSTOM_BUILD_FLAGS)
  if(INTERN_BUILD_MOBILE)
    if(USE_VULKAN)
      list(APPEND CUSTOM_BUILD_FLAGS --backend_whitelist CPU QuantizedCPU Vulkan)
    else()
      list(APPEND CUSTOM_BUILD_FLAGS --backend_whitelist CPU QuantizedCPU)
    endif()
  endif()

  if(SELECTED_OP_LIST)
    # With static dispatch we can omit the OP_DEPENDENCY flag. It will not calculate the transitive closure
    # of used ops. It only needs to register used root ops.
    if(NOT STATIC_DISPATCH_BACKEND AND NOT OP_DEPENDENCY)
      message(WARNING
        "For custom build with dynamic dispatch you have to provide the dependency graph of PyTorch operators.\n"
        "Switching to STATIC_DISPATCH_BACKEND=CPU. If you run into problems with static dispatch and still want"
        " to use selective build with dynamic dispatch, please try:\n"
        "1. Run the static analysis tool to generate the dependency graph, e.g.:\n"
        " LLVM_DIR=/usr ANALYZE_TORCH=1 tools/code_analyzer/build.sh\n"
        "2. Run the custom build with the OP_DEPENDENCY option pointing to the generated dependency graph, e.g.:\n"
        " scripts/build_android.sh -DSELECTED_OP_LIST=<op_list.yaml> -DOP_DEPENDENCY=<dependency_graph.yaml>\n"
      )
      set(STATIC_DISPATCH_BACKEND CPU)
    else()
      execute_process(
        COMMAND
        "${PYTHON_EXECUTABLE}" ${CMAKE_CURRENT_LIST_DIR}/../tools/code_analyzer/gen_op_registration_allowlist.py
        --op-dependency "${OP_DEPENDENCY}"
        --root-ops "${SELECTED_OP_LIST}"
        OUTPUT_VARIABLE OP_REGISTRATION_WHITELIST
      )
      separate_arguments(OP_REGISTRATION_WHITELIST)
      message(STATUS "Custom build with op registration whitelist: ${OP_REGISTRATION_WHITELIST}")
      list(APPEND CUSTOM_BUILD_FLAGS
        --force_schema_registration
        --op_registration_whitelist ${OP_REGISTRATION_WHITELIST})
    endif()
  endif()

  if(STATIC_DISPATCH_BACKEND)
    message(STATUS "Custom build with static dispatch backend: ${STATIC_DISPATCH_BACKEND}")
    list(APPEND CUSTOM_BUILD_FLAGS
      --static_dispatch_backend ${STATIC_DISPATCH_BACKEND})
  endif()

  set(GEN_COMMAND
      "${PYTHON_EXECUTABLE}" -m tools.codegen.gen
      --source-path ${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen
      --install_dir ${CMAKE_BINARY_DIR}/aten/src/ATen
      ${GEN_ROCM_FLAG}
      ${CUSTOM_BUILD_FLAGS}
      ${GEN_VULKAN_FLAGS}
  )

  execute_process(
      COMMAND ${GEN_COMMAND}
        --output-dependencies ${CMAKE_BINARY_DIR}/aten/src/ATen/generated_cpp.txt
      RESULT_VARIABLE RETURN_VALUE
      WORKING_DIRECTORY ${CMAKE_CURRENT_LIST_DIR}/..
  )
  if(NOT RETURN_VALUE EQUAL 0)
    message(STATUS ${generated_cpp})
    message(FATAL_ERROR "Failed to get generated_cpp list")
  endif()
  # FIXME: the file/variable name lists cpp, but these list both cpp and .h files
  file(READ ${CMAKE_BINARY_DIR}/aten/src/ATen/generated_cpp.txt generated_cpp)
  file(READ ${CMAKE_BINARY_DIR}/aten/src/ATen/generated_cpp.txt-cuda cuda_generated_cpp)
  file(READ ${CMAKE_BINARY_DIR}/aten/src/ATen/generated_cpp.txt-core core_generated_cpp)

  file(GLOB_RECURSE all_templates "${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/templates/*")

  file(MAKE_DIRECTORY ${CMAKE_BINARY_DIR}/aten/src/ATen)
  file(MAKE_DIRECTORY ${CMAKE_BINARY_DIR}/aten/src/ATen/core)

  add_custom_command(OUTPUT ${generated_cpp} ${cuda_generated_cpp} ${core_generated_cpp}
    COMMAND ${GEN_COMMAND}
    DEPENDS ${all_python} ${all_templates}
      ${CMAKE_CURRENT_LIST_DIR}/../aten/src/ATen/native/native_functions.yaml
    WORKING_DIRECTORY ${CMAKE_CURRENT_LIST_DIR}/..
    )

  # Generated headers used from a CUDA (.cu) file are
  # not tracked correctly in CMake. We make the libATen.so depend explicitly
  # on building the generated ATen files to work around this.
  add_custom_target(ATEN_CPU_FILES_GEN_TARGET DEPENDS ${generated_cpp} ${core_generated_cpp})
  add_custom_target(ATEN_CUDA_FILES_GEN_TARGET DEPENDS ${cuda_generated_cpp})
  add_library(ATEN_CPU_FILES_GEN_LIB INTERFACE)
  add_library(ATEN_CUDA_FILES_GEN_LIB INTERFACE)
  add_dependencies(ATEN_CPU_FILES_GEN_LIB ATEN_CPU_FILES_GEN_TARGET)
  add_dependencies(ATEN_CUDA_FILES_GEN_LIB ATEN_CUDA_FILES_GEN_TARGET)
endif()
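
The ATen block above keeps two parallel lists, `CPU_CAPABILITY_NAMES` and `CPU_CAPABILITY_FLAGS`, and later reads them by a shared index. The sketch below shows that pattern in isolation as a standalone script. The variables `HAVE_AVX512` and `HAVE_AVX2`, the kernel name `native/cpu/Example.cpp`, and the file name `capability_demo.cmake` are assumptions made for illustration; the real file uses `CXX_AVX512_FOUND` and `CXX_AVX2_FOUND` from compiler checks. The sketch also appends a flags entry on every path through the AVX2 branch. As far as can be read from the listing, when `ATEN_AVX512_256` is defined but does not match `TRUE`, or AVX512 is not found, the listing appends the `AVX2` name without a matching flags entry, so the length check here is a defensive addition rather than something the original does.

```cmake
# Run with: cmake -P capability_demo.cmake   (hypothetical file name)
cmake_minimum_required(VERSION 3.10)

# Assumed inputs, for illustration only.
set(OPT_FLAG "-O3 ")
set(HAVE_AVX512 TRUE)
set(HAVE_AVX2 TRUE)

list(APPEND NAMES "DEFAULT")
list(APPEND FLAGS "${OPT_FLAG}")

if(HAVE_AVX512)
  list(APPEND NAMES "AVX512")
  list(APPEND FLAGS "${OPT_FLAG} -mavx512f -mavx512bw -mavx512vl -mavx512dq -mfma")
endif()

if(HAVE_AVX2)
  list(APPEND NAMES "AVX2")
  # Quoting the environment expansion keeps the condition well-formed
  # even when the variable is unset or empty.
  if("$ENV{ATEN_AVX512_256}" MATCHES "TRUE" AND HAVE_AVX512)
    list(APPEND FLAGS "${OPT_FLAG} -march=native")
  else()
    list(APPEND FLAGS "${OPT_FLAG} -mavx2 -mfma")
  endif()
endif()

# The lists are indexed together, so they must have the same length.
list(LENGTH NAMES num_names)
list(LENGTH FLAGS num_flags)
if(NOT num_names EQUAL num_flags)
  message(FATAL_ERROR "NAMES and FLAGS are out of sync")
endif()

# foreach(... RANGE n) iterates from 0 to n inclusive, hence the "-1".
math(EXPR last_index "${num_names}-1")
foreach(i RANGE ${last_index})
  list(GET NAMES ${i} capability)
  list(GET FLAGS ${i} flags)
  # Mirrors the ${NAME}.${CPU_CAPABILITY}.cpp naming used for kernel copies.
  message(STATUS "native/cpu/Example.cpp.${capability}.cpp -> ${flags}")
endforeach()
```

Running it with and without `ATEN_AVX512_256=TRUE` in the environment shows how the flags for the AVX2 entry change while the set of capability names stays the same.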

function(append_filelist name outputvar)
  set(_rootdir "${${CMAKE_PROJECT_NAME}_SOURCE_DIR}/")
  # configure_file adds its input to the list of CMAKE_RERUN dependencies
  configure_file(
      ${PROJECT_SOURCE_DIR}/tools/build_variables.bzl
      ${PROJECT_BINARY_DIR}/caffe2/build_variables.bzl)
  execute_process(
    COMMAND "${PYTHON_EXECUTABLE}" -c
            "exec(open('${PROJECT_SOURCE_DIR}/tools/build_variables.bzl').read());print(';'.join(['${_rootdir}' + x for x in ${name}]))"
    WORKING_DIRECTORY "${_rootdir}"
    RESULT_VARIABLE _retval
    OUTPUT_VARIABLE _tempvar)
  if(NOT _retval EQUAL 0)
    message(FATAL_ERROR "Failed to fetch filelist ${name} from build_variables.bzl")
  endif()
  string(REPLACE "\n" "" _tempvar "${_tempvar}")
  list(APPEND ${outputvar} ${_tempvar})
  set(${outputvar} "${${outputvar}}" PARENT_SCOPE)
endfunction()
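
`append_filelist` works by having Python evaluate `build_variables.bzl` and print the requested list joined with semicolons, which is CMake's list separator. The following standalone sketch reproduces that technique on a small stand-in file. The names `demo_variables.bzl`, `demo_sources`, the `/repo/` prefix, and the script name `filelist_demo.cmake` are hypothetical, and the sketch assumes a Python 3 interpreter can be found on `PATH` (the real build passes `PYTHON_EXECUTABLE` in).

```cmake
# Run with: cmake -P filelist_demo.cmake   (hypothetical file name)
cmake_minimum_required(VERSION 3.10)

# Assumption: python3 (or python) is available on PATH.
find_program(PYTHON_EXECUTABLE NAMES python3 python)
if(NOT PYTHON_EXECUTABLE)
  message(FATAL_ERROR "No Python interpreter found")
endif()

# Hypothetical stand-in for tools/build_variables.bzl, written next to this script.
set(demo_bzl "${CMAKE_CURRENT_LIST_DIR}/demo_variables.bzl")
file(WRITE "${demo_bzl}" "demo_sources = [\n    \"src/a.cpp\",\n    \"src/b.cpp\",\n]\n")

# Made-up root prefix; the real function uses the project source directory.
set(root "/repo/")

# Evaluate the file as Python and print the list joined with ';'.
execute_process(
  COMMAND "${PYTHON_EXECUTABLE}" -c
          "exec(open('${demo_bzl}').read());print(';'.join(['${root}' + x for x in demo_sources]))"
  RESULT_VARIABLE retval
  OUTPUT_VARIABLE out)
if(NOT retval EQUAL 0)
  message(FATAL_ERROR "Failed to read demo_sources")
endif()

# Drop the trailing newline from print() before treating the text as a list.
string(REPLACE "\n" "" out "${out}")
list(APPEND DEMO_SRCS ${out})
message(STATUS "DEMO_SRCS = ${DEMO_SRCS}")
```

The approach relies on the `.bzl` file being valid Python for the parts that get evaluated, which holds for plain list assignments like the one in this stand-in.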

set(NUM_CPU_CAPABILITY_NAMES ${NUM_CPU_CAPABILITY_NAMES} PARENT_SCOPE)
set(CPU_CAPABILITY_FLAGS ${CPU_CAPABILITY_FLAGS} PARENT_SCOPE)