Mirror of https://github.com/saymrwulf/pytorch.git (synced 2026-05-14 20:57:59 +00:00)

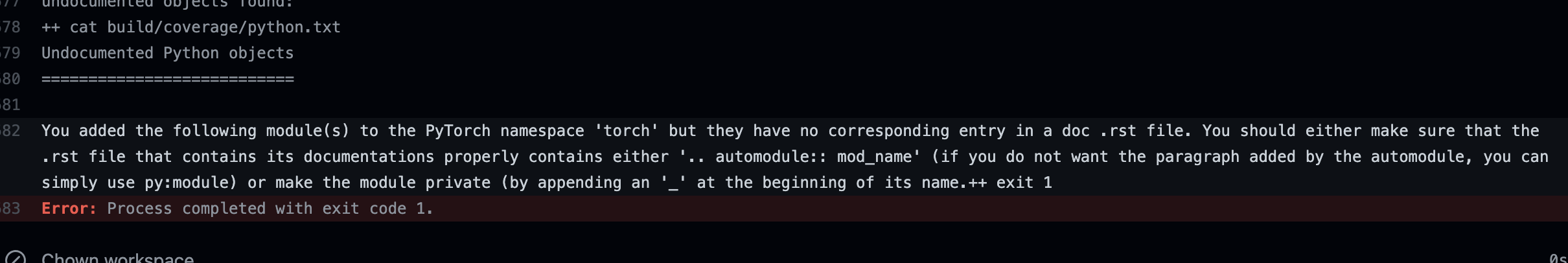

Summary: Add a check to make sure we do not add new submodules without documenting them in an rst file. This is especially important because our doc coverage only runs for modules that are properly listed.

Temporarily removed "torch" from the list to make sure the failure in CI looks as expected. EDIT: fixed now.

This is what a CI failure looks like for the top-level torch module as an example:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/67440
Reviewed By: jbschlosser
Differential Revision: D32005310
Pulled By: albanD
fbshipit-source-id: 05cb2abc2472ea4f71f7dc5c55d021db32146928

| Name |
|---|
| _static |
| _templates |
| community |
| elastic |
| notes |
| rpc |
| scripts |
| __config__.rst |
| amp.rst |
| autograd.rst |
| backends.rst |
| benchmark_utils.rst |
| bottleneck.rst |
| checkpoint.rst |
| complex_numbers.rst |
| conf.py |
| cpp_extension.rst |
| cpp_index.rst |
| cuda.rst |
| cudnn_persistent_rnn.rst |
| cudnn_rnn_determinism.rst |
| data.rst |
| ddp_comm_hooks.rst |
| distributed.algorithms.join.rst |
| distributed.elastic.rst |
| distributed.optim.rst |
| distributed.rst |
| distributions.rst |
| dlpack.rst |
| docutils.conf |
| fft.rst |
| futures.rst |
| fx.rst |
| hub.rst |
| index.rst |
| jit.rst |
| jit_builtin_functions.rst |
| jit_language_reference.rst |
| jit_language_reference_v2.rst |
| jit_python_reference.rst |
| jit_unsupported.rst |
| linalg.rst |
| math-quantizer-equation.png |
| mobile_optimizer.rst |
| model_zoo.rst |
| multiprocessing.rst |
| name_inference.rst |
| named_tensor.rst |
| nn.functional.rst |
| nn.init.rst |
| nn.rst |
| onnx.rst |
| optim.rst |
| package.rst |
| pipeline.rst |
| profiler.rst |
| quantization-support.rst |
| quantization.rst |
| random.rst |
| rpc.rst |
| sparse.rst |
| special.rst |
| storage.rst |
| tensor_attributes.rst |
| tensor_view.rst |
| tensorboard.rst |
| tensors.rst |
| testing.rst |
| torch.ao.ns._numeric_suite.rst |
| torch.ao.ns._numeric_suite_fx.rst |
| torch.overrides.rst |
| torch.rst |
| type_info.rst |
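
The commit summary above describes a check that fails when a submodule is not documented in any rst file. The sketch below illustrates one way such a check could work; it is not the code from the pull request. The function names `documented_modules` and `find_undocumented` are hypothetical, and the sketch assumes that a module counts as documented when an rst file declares it with a Sphinx `automodule`, `py:module`, or `module` directive.

```python
import re

# Matches Sphinx directives that declare a module, e.g.
#   .. automodule:: torch.fft
#   .. py:module:: torch.linalg
# ".. currentmodule::" is deliberately not matched: it only sets the
# namespace for later directives and does not document the module itself.
_MODULE_DIRECTIVE = re.compile(
    r"^\s*\.\.\s+(?:auto|py:)?module::\s*(\S+)", re.MULTILINE
)


def documented_modules(rst_texts):
    """Collect every module name declared in the given rst sources."""
    found = set()
    for text in rst_texts:
        found.update(_MODULE_DIRECTIVE.findall(text))
    return found


def find_undocumented(module_names, rst_texts):
    """Return the sorted module names that no rst source declares."""
    documented = documented_modules(rst_texts)
    return sorted(name for name in module_names if name not in documented)


if __name__ == "__main__":
    # Hypothetical rst contents; real input would be read from the
    # .rst files in the listing above.
    rst_sources = [
        ".. automodule:: torch.fft\n",
        "Linalg\n======\n\n.. py:module:: torch.linalg\n"
        ".. currentmodule:: torch.special\n",
    ]
    modules = ["torch.fft", "torch.linalg", "torch.special"]
    missing = find_undocumented(modules, rst_sources)
    if missing:
        print("Undocumented modules:", ", ".join(missing))
```

In a CI job, a non-empty result would be turned into a failing exit status so that a new submodule cannot land without a matching rst entry.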